Introduction

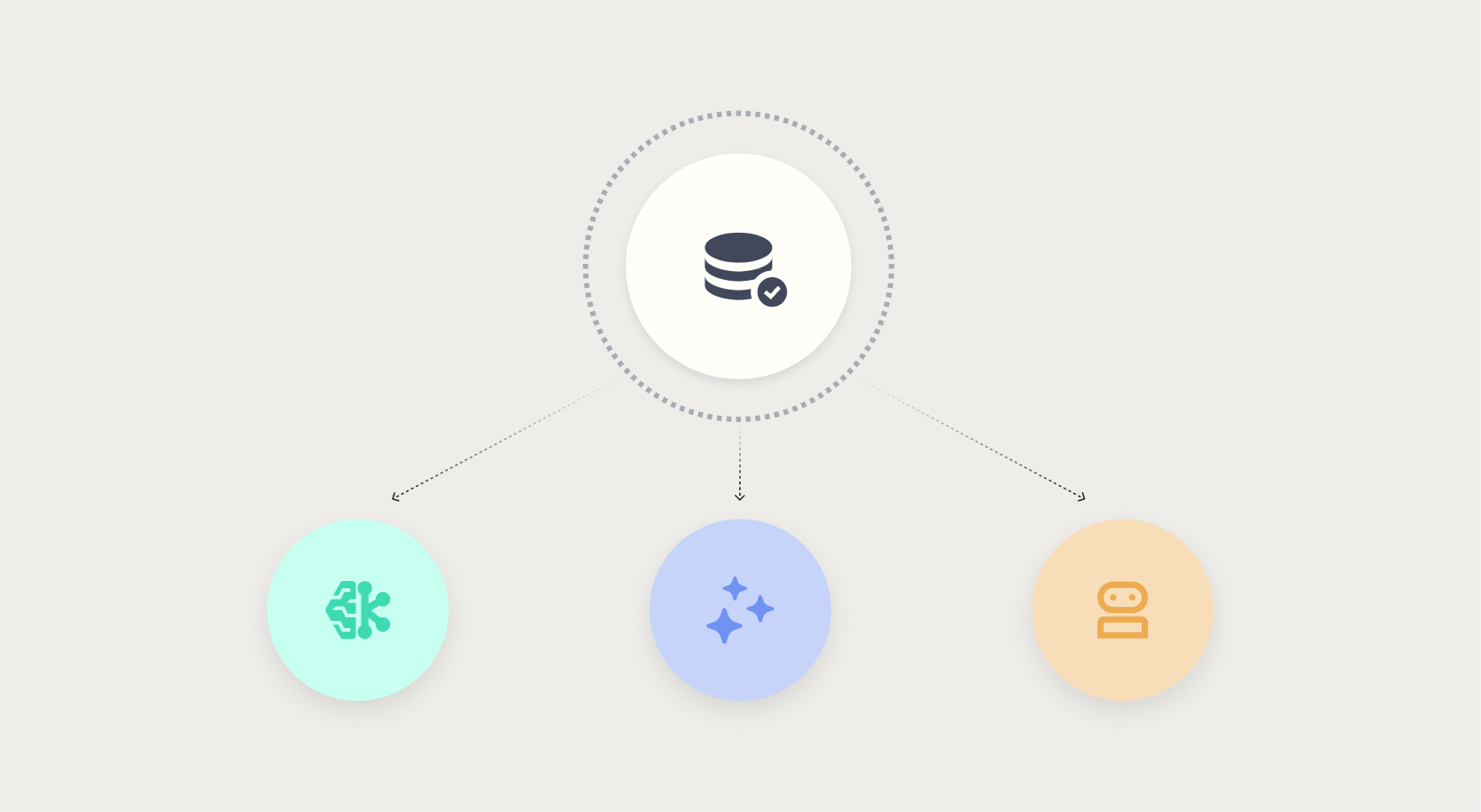

Nobody builds bad models on purpose, but many AI initiatives falter not because of algorithm complexity but because of invisible data defects. In the rush to deploy machine learning, generative AI, or agentic systems, organizations often treat data quality as an afterthought — until a chatbot confidently delivers a wrong answer or an autonomous agent commits budget on incomplete information. This article uncovers the top ten data quality traps that can silently sabotage your AI projects, with practical insights on how to spot and fix them before they cause real damage.

1. The Illusion of Clean Data in Traditional ML

In traditional machine learning, data quality failures are at least visible: a model’s accuracy drops, a dashboard shows an anomaly, or an analyst catches an outlier. Because these systems predict rather than act, errors can be corrected through retraining. This creates a dangerous false sense of security — teams assume that cleaning data once is enough. In reality, data decays over time, and a dataset that looked pristine at the start can silently corrupt predictions as domain context shifts. The lesson: never assume static cleanliness; implement continuous monitoring.

2. Generative AI Hallucinations from Stale Knowledge Bases

Generative AI models that pull from knowledge bases are only as good as the data feeding them. When that data becomes outdated or incomplete, the model doesn’t flag an error; it generates a confident-sounding wrong answer. A customer-facing chatbot might quote old policies or invent facts, eroding trust with every response. Unlike traditional analytics, where a bad number stands out, a convincing hallucination blends seamlessly into conversation. The fix involves rigorous freshness checks, versioning, and feedback loops that flag suspicious outputs for human review.
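A freshness check can be as simple as comparing each document's last-verified date against an age budget before it is allowed into retrieval. The sketch below is illustrative only: the record layout, the document ids, and the 90-day budget are assumptions, not a prescribed standard.

```python
from datetime import datetime, timedelta, timezone

# Assumed freshness budget; in practice this would vary by content type.
MAX_AGE = timedelta(days=90)


def stale_documents(docs, now, max_age=MAX_AGE):
    """Return ids of documents whose last verification is older than max_age."""
    return [doc_id for doc_id, verified_at in docs if now - verified_at > max_age]


# Hypothetical knowledge-base records: (document id, last verified timestamp).
docs = [
    ("refund-policy", datetime(2024, 1, 10, tzinfo=timezone.utc)),
    ("shipping-faq", datetime(2024, 5, 20, tzinfo=timezone.utc)),
]
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
print(stale_documents(docs, now))  # ['refund-policy']
```

Stale documents could then be excluded from retrieval or routed to an owner for re-verification, depending on how much risk the use case tolerates.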

3. Agentic AI Actions Based on Incomplete Supplier Data

Agentic AI systems — autonomous agents that make decisions and execute actions — amplify data risks dramatically. Consider a procurement agent trained to negotiate supplier contracts. If its training data lacks complete price histories or omits key terms, the agent might commit budget based on partial information, with no human oversight until the damage is done. These failures are insidious because the AI operated exactly as designed, but on data never fit for purpose. Organizations must embed data quality gates into every step of an agent’s decision pipeline.
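A quality gate in this sense is a check that runs before the agent acts and escalates to a human when the data is not fit for the decision. Here is a minimal sketch, assuming a hypothetical supplier record with the field names shown and an arbitrary minimum of three price points.

```python
# Hypothetical fields a procurement decision is assumed to depend on.
REQUIRED_FIELDS = ("supplier_id", "unit_price", "payment_terms", "price_history")
MIN_PRICE_POINTS = 3  # assumed minimum history length


def gate(record):
    """Return the reasons a record is unfit for use; an empty list means pass."""
    problems = [
        f"missing {field}"
        for field in REQUIRED_FIELDS
        if record.get(field) in (None, "", [])
    ]
    history = record.get("price_history") or []
    if history and len(history) < MIN_PRICE_POINTS:
        problems.append("price history too short")
    return problems


def decide(record):
    """Let the agent proceed only when the gate passes; otherwise escalate."""
    problems = gate(record)
    if problems:
        return {"action": "escalate_to_human", "reasons": problems}
    return {"action": "proceed"}


incomplete = {"supplier_id": "S-17", "unit_price": 4.2, "price_history": [4.0, 4.2]}
print(decide(incomplete))
```

The design choice worth noting is that the gate returns reasons rather than a bare boolean, so the human who receives the escalation can see what was missing.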

4. Data Drift and Model Decay Over Time

Data drift occurs when the statistical properties of input data change after deployment, causing model predictions to degrade. For instance, a fraud detection model trained on pre-pandemic transactions may fail as consumer behavior shifts. Drift can be gradual or abrupt, and gradual drift in particular tends to pass for normal variation until a critical error surfaces. Telling real drift apart from ordinary noise requires automated monitoring that tracks feature distributions and alerts teams when thresholds are breached. Without this, your model may be living in the past while the world moves on.
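One widely used way to track a feature distribution is the Population Stability Index (PSI), which compares binned shares of a baseline sample against a recent one. The sketch below is one possible implementation, not the only one: equal-width bins taken from the baseline, a small floor for empty bins, and the 0.25 alert level (a commonly quoted rule of thumb) are all assumptions to tune.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range fall into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = list(range(100))            # stand-in for training-time values
shifted = [v + 50 for v in baseline]   # stand-in for drifted production values
ALERT_THRESHOLD = 0.25                 # assumed alert level
print(psi(baseline, baseline))                   # 0.0
print(psi(baseline, shifted) > ALERT_THRESHOLD)  # True
```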

5. Incomplete Data Points That Skew Training Sets

Missing values are a classic data quality issue, but they’re often handled poorly: filled with averages, dropped entirely, or ignored. In generative AI, incomplete training examples can force the model to invent plausible but false completions. In tabular ML, missing fields can bias feature importance. Worse, the pattern of missingness itself might correlate with real-world factors (e.g., high-income customers being more likely to skip survey fields), in which case dropping or mean-filling those rows skews the training set toward whoever did answer. Imputation that accounts for why values are missing, paired with robustness checks, is essential to prevent incomplete data from poisoning your pipeline.
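A quick way to test whether missingness correlates with a real-world factor is to compare missing rates across groups before choosing an imputation strategy. The following sketch assumes rows are plain dictionaries; the column names `income_band` and `age` are made up for illustration.

```python
from collections import defaultdict


def missing_rate_by_group(rows, group_key, field):
    """Share of rows in each group where `field` is absent or None."""
    totals, missing = defaultdict(int), defaultdict(int)
    for row in rows:
        group = row[group_key]
        totals[group] += 1
        if row.get(field) is None:
            missing[group] += 1
    return {group: missing[group] / totals[group] for group in totals}


# Hypothetical survey rows.
rows = [
    {"income_band": "high", "age": None},
    {"income_band": "high", "age": 41},
    {"income_band": "low", "age": 30},
    {"income_band": "low", "age": 25},
]
print(missing_rate_by_group(rows, "income_band", "age"))  # {'high': 0.5, 'low': 0.0}
```

A large gap between groups suggests the values are not missing at random, and that a single global average would misrepresent the group that skipped the field.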

6. Inconsistent Data Across Multiple Sources

When AI systems combine data from different databases, APIs, or departments, inconsistencies multiply. One source might use “USD” for currency, another “$”, and a third “US Dollar”. A generative report that pulls from all three can produce garbled or misleading output. In agentic AI, inconsistent units can cause agents to misinterpret price ranges or delivery times. Standardizing formats, units, and semantics through a common data layer is non-negotiable, and continuous validation ensures that integration doesn’t introduce silent errors.
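For the currency example, standardization can start with an explicit alias table that maps every known spelling to one canonical code and rejects anything it does not recognize. This is a sketch under assumptions: the alias table is hypothetical, and treating a bare "$" as USD only holds if no source uses it for another dollar currency.

```python
# Hypothetical alias table; "$" -> "USD" is an assumption about these sources.
CURRENCY_ALIASES = {
    "usd": "USD",
    "$": "USD",
    "us dollar": "USD",
    "us dollars": "USD",
}


def normalize_currency(raw):
    """Map a source-specific currency label to a canonical code, or fail loudly."""
    key = raw.strip().lower()
    try:
        return CURRENCY_ALIASES[key]
    except KeyError:
        raise ValueError(f"unrecognized currency label: {raw!r}") from None


print([normalize_currency(label) for label in ("USD", "$", "US Dollar")])
# ['USD', 'USD', 'USD']
```

Raising on unknown labels, rather than passing them through, is what keeps a new source from introducing a silent inconsistency.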

7. Labeling Errors in Supervised Learning Datasets

In supervised learning, the model learns from labeled examples — so a mislabeled image or misclassified text directly corrupts the outcome. A medical diagnosis model trained on X-rays with incorrect labels could fail to detect cancer. The cost of relabeling is high, but the cost of bad predictions is higher. Crowdsourced labels or automated labeling tools often introduce noise; human-in-the-loop verification and adversarial checks help surface systematic errors before the model ships. Treat labeling as a first-class data quality process.
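One common way to quantify label noise is to have two annotators label the same items and compute Cohen's kappa, which corrects raw agreement for agreement expected by chance. The sketch below also lists the items they disagree on, as candidates for human review. The annotator data is invented for illustration.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators on the same items."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:  # both annotators used a single identical label
        return 1.0
    return (observed - expected) / (1 - expected)


def disagreements(labels_a, labels_b):
    """Indices of items the two annotators labeled differently."""
    return [i for i, (a, b) in enumerate(zip(labels_a, labels_b)) if a != b]


# Hypothetical labels from two annotators on the same four items.
annotator_1 = ["pos", "pos", "neg", "neg"]
annotator_2 = ["pos", "neg", "neg", "neg"]
print(cohen_kappa(annotator_1, annotator_2))    # 0.5
print(disagreements(annotator_1, annotator_2))  # [1]
```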

8. Data Bias That Perpetuates Unfair Outcomes

Bias in training data leads to models that discriminate, even when the algorithm is neutral. Historical data may underrepresent certain groups or over-represent others. For generative AI, biased text corpora can reproduce stereotypes. For agentic systems, biased training could result in unfair resource allocation. The challenge is that bias is often not obvious until the model is live. Proactively auditing data for representation, implementing fairness constraints, and testing on diverse slices are critical steps to avoid reputational and legal fallout.
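A representation audit can begin by comparing each group's share of the training data with a reference share, such as the population the model will serve. In this sketch the group names, the reference shares, and the 0.5 tolerance are all assumptions; choosing the right reference is itself a judgment call for domain experts.

```python
from collections import Counter


def underrepresented(groups, reference_shares, tolerance=0.5):
    """Groups whose share of the data is below tolerance * their reference share."""
    counts = Counter(groups)
    total = len(groups)
    flagged = {}
    for group, reference in reference_shares.items():
        share = counts.get(group, 0) / total
        if share < tolerance * reference:
            flagged[group] = share
    return flagged


# Hypothetical training set: 90 rows from group "a", 10 from group "b".
training_groups = ["a"] * 90 + ["b"] * 10
reference = {"a": 0.6, "b": 0.4}  # assumed shares in the served population
print(underrepresented(training_groups, reference))  # {'b': 0.1}
```

This only checks representation by count. It says nothing about label quality or outcomes within each group, which is why testing on diverse slices is still needed.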

9. Security and Privacy Vulnerabilities from Bad Data

Poor data quality doesn’t just affect accuracy; it also poses security risks. Malicious data injected into training pipelines can plant backdoors in generative models, and untrusted content pulled in at inference time can carry prompt injections that lead to data exfiltration. In agentic AI, unclean data may expose sensitive user information inadvertently. Data that is incomplete in security fields (like access logs) can conceal breaches. Robust data validation must include integrity checks, source provenance, and confidentiality assessments to ensure your AI doesn’t become a liability.
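A basic integrity check is to record a cryptographic digest when a dataset is approved and to verify it again before training. The sketch below uses SHA-256 from Python's standard library; the sample bytes are placeholders, and it assumes the recorded digest is itself stored somewhere an attacker cannot modify.

```python
import hashlib
import hmac


def sha256_of(data: bytes) -> str:
    """Hex digest of the raw dataset bytes."""
    return hashlib.sha256(data).hexdigest()


def verify(data: bytes, expected_digest: str) -> bool:
    """True only if the data still matches the digest recorded at approval time."""
    return hmac.compare_digest(sha256_of(data), expected_digest)


# Placeholder content standing in for an approved training file.
approved = b"id,label\n1,benign\n2,fraud\n"
recorded_digest = sha256_of(approved)

tampered = b"id,label\n1,benign\n2,benign\n"
print(verify(approved, recorded_digest))  # True
print(verify(tampered, recorded_digest))  # False
```

A digest proves the bytes have not changed since approval. It does not prove the approved data was clean in the first place, so it complements provenance checks rather than replacing them.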

10. How to Build a Data Quality Culture in AI Teams

Mitigating these pitfalls requires more than tools — it demands a shift in mindset. Start by embedding data quality checks into the CI/CD pipeline for AI, with automated validation for schema, freshness, and distribution shifts. Establish clear ownership: assign data stewards for each data source. Foster collaboration between data engineers, ML engineers, and domain experts. Use dashboards that surface quality metrics in real time. Most importantly, treat data quality as an ongoing practice, not a one-time cleanup. Only then can your AI systems reliably move from prediction to action.
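As a starting point for automated validation in CI, a schema check can run against every incoming batch and fail the build when columns are missing or mistyped. This is a minimal sketch: the schema, the column names, and the dictionary row format are hypothetical, and a real pipeline would likely add freshness and distribution checks alongside it.

```python
# Hypothetical expected schema for one data source.
SCHEMA = {"customer_id": int, "signup_date": str, "monthly_spend": float}


def schema_errors(rows, schema=SCHEMA):
    """Return a human-readable error for every missing or mistyped column."""
    errors = []
    for i, row in enumerate(rows):
        for column, expected_type in schema.items():
            if column not in row:
                errors.append(f"row {i}: missing column {column}")
            elif not isinstance(row[column], expected_type):
                actual = type(row[column]).__name__
                errors.append(
                    f"row {i}: {column} is {actual}, expected {expected_type.__name__}"
                )
    return errors


batch = [
    {"customer_id": 1, "signup_date": "2024-03-01", "monthly_spend": 42.5},
    {"customer_id": "2", "signup_date": "2024-03-02"},
]
for error in schema_errors(batch):
    print(error)
# row 1: customer_id is str, expected int
# row 1: missing column monthly_spend
```

In a CI job, a non-empty error list would fail the step, which turns data quality from a dashboard people may or may not read into a condition for shipping.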

Conclusion

Data quality is the silent foundation of any successful AI initiative — whether you’re building a regression model, a generative chatbot, or an autonomous agent. The failures described here are not hypothetical; they are happening today in organizations of all sizes. By recognizing these ten hidden pitfalls and proactively addressing them, you can prevent costly derailments and build AI that earns trust. Remember: clean data doesn’t happen by accident. It requires vigilance, collaboration, and a commitment to quality at every stage of the AI lifecycle.